UPDATE: It’s no surprise, and I assumed more would be revealed, but another app associated with supposed academic research has been outed as an assimilator.

UPDATE: Not everyone has had *that* notification from Facebook yet telling them whether or not they were assimilated by Cambridge Analytica…here’s a workaround: jump to this help page:

https://www.facebook.com/help/1873665312923476

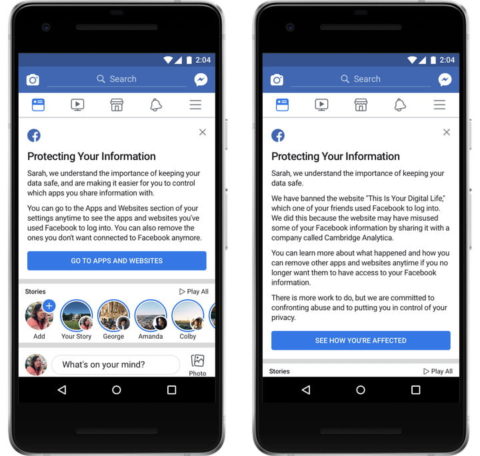

If your Facebook stuff was harvested by Cambridge Analytica because someone you’re connected to used the “mydigitallife” app back before 2014, you should’ve had a notification from FB about it by now. If you haven’t seen a notification, it doesn’t necessarily mean you weren’t assimilated by CA or any of the other companies FB has banned since it changed its systems that year. The notifications are still being rolled out. The majority of the billion+ users of Facebook will simply get an advisory notification, but some 88 million will presumably get a notice alerting them to their data having been compromised.

A contact on Facebook suggested that:

“For most of us, I suspect the stuff we post on FB would just confuse anyone trying to use it”

Now, I realise they’re being flippant, but it goes deeper and I think a lot of people do not realise that.

He’s a contact, but we’re not even friends on Facebook and I can see a lot about him: his career history, all of his FB friends, his photos, the names of four of his family members who are on FB (son, cousin, and a couple of others), and a lot more besides. The fact that I can see it means anyone on Facebook can see it too and that could be someone building a profile for whatever reason…ID theft, insurance company, political rival…

I can see where he’s “checked in”, countries visited, railway stations, pubs, everywhere. I can guess which mobile phone company he’s with, because he likes one of the major companies and none of the others. I can see his political persuasion and affiliations and the politics he doesn’t like. I can guess where he likes to have a drink, because he only likes one pub on Facebook.

So, although it all seems trivial…it’s kind of not. A hacker could easily build up enough information to use social engineering (smooth talk) on a receptionist, operator, bartender, whoever, to dig deeper, perhaps gathering enough info to open a bank account in his name or take out a loan. This is one of the reasons why it’s worrying, quite apart from the political propaganda and fake news that twist democracy.

Friend of a friend

As I’ve said though, the current debacle is not even about what you put online…the problem with CA specifically is that an academic created an app that he paid people to use and when they (about 270,000 people) accepted the terms and conditions to use it, the app could then access all of the information that all of their friends had loaded into Facebook. This included all of the stuff that those friends had set as private. They reckon 88 million people were harvested by this app alone.
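To put a rough number on that fan-out, here’s a back-of-envelope sketch in Python using only the two figures quoted above. The function name is my own, and the sum assumes no overlap between people’s friend lists, which won’t be true in practice, so treat it as an illustration rather than a measurement.

```python
def average_reach_per_installer(people_harvested: int, installers: int) -> float:
    """Average number of profiles exposed per person who installed the app.

    Assumes (unrealistically) that no two installers share any friends.
    """
    if installers <= 0:
        raise ValueError("installers must be positive")
    return people_harvested / installers


installers = 270_000            # people who accepted the app's terms (figure quoted above)
people_harvested = 88_000_000   # total profiles reportedly harvested (figure quoted above)

reach = average_reach_per_installer(people_harvested, installers)
print(f"Each installer exposed roughly {reach:.0f} profiles on average")
```

In other words, each person who clicked “accept” opened the door to a few hundred other people who never saw the terms and conditions at all.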

FB was called out on this issue in 2014 and blocked that app and then seemingly kept quiet about the issue. They changed their software (Graph API) so that other apps couldn’t quite do the same thing after that time. But even now, every time somebody does one of those “easy” quizzes, or uses any other app where you log in with Facebook, and then shares it, the quiz app company gets to peek at a huge mass of their friends’ data.

Fourth Party Data

And, of course, all the data that these third parties have harvested might be stolen by a fourth party…at least at the moment it’s pretty much hidden, but a hacker could break open their servers and post everything to the open web at any time. If we’re lucky, they stored it in encrypted form, but given the recent history of hacking, that’s unlikely: so much of the data that gets stolen and released on to the net was never encrypted.
